
6/1/2023 Highest quality arduino camera

In some cases this can make the code less readable - but the beauty of an Arduino library is that this can be abstracted (hidden) from user sketch code beneath the cleaner library function APIs.

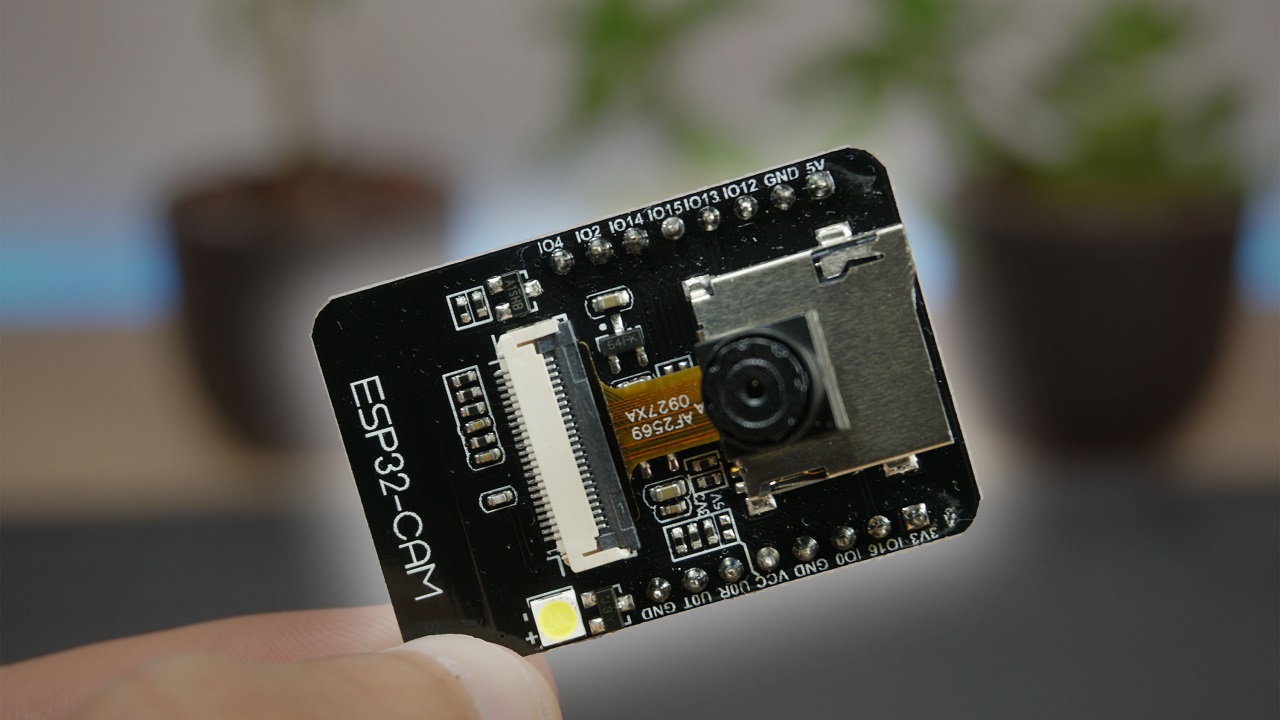

Sometimes it is necessary to restructure algorithms, pay attention to compiler behavior, or even analyze timing of machine code instructions in order to squeeze the most out of a microcontroller. However, embedded systems have constrained resources, and when applications demand more performance, some trade-offs might have to be made. Being readable and optimized don’t necessarily have to be mutually exclusive. In fact there are very good reasons to prioritize readable, maintainable code. It’s rarely practical or necessary to optimize every line of code you write. Let’s have a look at how Larry approached the camera library optimization and how some of these techniques can apply to your Arduino code in general. Larry’s work got the camera image read down from 1500ms to just 393ms for a QCIF (176×144 pixel) image. This is a simplified example of how two of the CMSIS-NN optimization techniques are used.įigure 3: Performance with CMSIS-NN and camera library optimizationsįor this, we enlisted the help of Larry Bank who specializes in embedded software optimization. _SMLAD performs two multiply and accumulate in one cycle.However, using loop unrolling and SIMD instructions, the loop will end up looking like this: a_operand = a | a << 16 // put a, a into one variableī_operand = b | b << 16 // vice versa for b With regular C, the code would look something like this: for(i=0 i<2 ++i) These techniques combined will give us the following example where the SIMD instruction, SMLAD (Signed Multiply with Addition), is used together with loop unrolling to perform a matrix multiplication y=a*b, where a=Ī, b are 8-bit values and y is a 32-bit value. Another optimization technique used by the CMSIS-NN library is loop unrolling. That will enable the optimized kernels to perform multiple operations in one cycle using SIMD (Single Instruction Multiple Data) instructions. The Arduino Nano 33 BLE board is powered by Arm Cortex-M4, which supports DSP extensions. 
The library utilizes the processor’s capabilities, such as DSP and M-Profile Vector ( MVE) extensions, to enable the best possible performance. The CMSIS-NN library provides optimized neural network kernel implementations for all Arm’s Cortex-M processors, ranging from Cortex-M0 to Cortex-M55. The only difference you should see is that it runs a lot faster! By selecting the person_detection example in the Arduino_TensorFlowLite library, you are automatically including CMSIS-NN underneath and benefitting from these optimizations. Recent optimizations done by Google and Arm to the CMSIS-NN library also improved the TensorFlow Lite Micro inference speed by over 16x, and as a consequence bringing down inference time from 19 seconds to just 1.2 seconds on the Arduino Nano 33 BLE boards. Combined with an OV767x module makes a low-cost machine vision solution for lower frame-rate applications like the person detection example in TensorFlow Lite Micro. There are higher performance industrial-targeted options like the Arduino Portenta available for machine vision, but the Arduino Nano 33 BLE has sufficient performance with TensorFlow Lite Micro support ready in the Arduino IDE. The rest of this article is going to look at some of the lower level optimization work that made this all possible. If you just want to try this and run machine learning on Arduino, you can skip to the project tutorial. Arduino recently announced an update to the Arduino_OV767x camera library that makes it possible to run machine vision using TensorFlow Lite Micro on your Arduino Nano 33 BLE board.
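The SMLAD and loop unrolling idea described in this article can be filled out into a complete sketch. What follows is a minimal, hedged version and not CMSIS-NN's actual kernel code. It assumes `a` is a two-element vector and `b` is a 2×2 matrix (shapes chosen purely for illustration), and it uses a hypothetical `smlad_emul` helper that mimics the `__SMLAD` intrinsic in portable C so the code can also be compiled off-target. The names `pack_q7x2`, `matvec_plain`, `matvec_packed` and `check_versions_agree` are made up for this example.

```c
#include <assert.h>
#include <stdint.h>

/* Portable stand-in for the CMSIS __SMLAD intrinsic (assumption: used here so
 * the sketch builds on a PC as well). Treats x and y as two signed 16-bit
 * lanes each and returns acc + x.lo * y.lo + x.hi * y.hi. */
int32_t smlad_emul(uint32_t x, uint32_t y, int32_t acc)
{
    int16_t x_lo = (int16_t)(x & 0xFFFFu);
    int16_t x_hi = (int16_t)(x >> 16);
    int16_t y_lo = (int16_t)(y & 0xFFFFu);
    int16_t y_hi = (int16_t)(y >> 16);
    return acc + (int32_t)x_lo * y_lo + (int32_t)x_hi * y_hi;
}

/* Pack two signed 8-bit values into the low and high 16-bit lanes.
 * Each value is sign-extended to 16 bits, then masked into its lane. */
uint32_t pack_q7x2(int8_t lo, int8_t hi)
{
    return (uint32_t)(uint16_t)lo | ((uint32_t)(uint16_t)hi << 16);
}

/* Reference version in regular C: y[i] = sum over j of a[j] * b[j][i]. */
void matvec_plain(const int8_t a[2], int8_t b[2][2], int32_t y[2])
{
    for (int i = 0; i < 2; ++i) {
        y[i] = 0;
        for (int j = 0; j < 2; ++j) {
            y[i] += (int32_t)a[j] * b[j][i];
        }
    }
}

/* Unrolled version: the inner j-loop becomes one dual multiply-accumulate. */
void matvec_packed(const int8_t a[2], int8_t b[2][2], int32_t y[2])
{
    /* a[0] and a[1] go into one 32-bit operand, built once outside the loop. */
    uint32_t a_operand = pack_q7x2(a[0], a[1]);
    for (int i = 0; i < 2; ++i) {
        /* Column i of b, packed the same way. */
        uint32_t b_operand = pack_q7x2(b[0][i], b[1][i]);
        y[i] = smlad_emul(a_operand, b_operand, 0);
    }
}

/* Asserts that both versions agree on a sample input with negative values. */
void check_versions_agree(void)
{
    const int8_t a[2] = { 3, -4 };
    int8_t b[2][2] = { { 5, -6 }, { 7, 8 } };
    int32_t y_plain[2], y_packed[2];
    matvec_plain(a, b, y_plain);
    matvec_packed(a, b, y_packed);
    assert(y_plain[0] == y_packed[0]);
    assert(y_plain[1] == y_packed[1]);
}
```

On a Cortex-M4 build with the CMSIS headers available, `smlad_emul` could presumably be swapped for `__SMLAD`, which takes its operands in the same order (two packed operands, then the accumulator) using `uint32_t` types. Note that each 8-bit value is sign-extended and masked into its own 16-bit lane before packing; OR-ing unmasked values together would give wrong results for negative inputs.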
